The dataset provided in that example is the same between all 3 software's, taken with a low-quality raspberry pi 5mp camera. About 50 pics total including some with the shoe sitting on its side so the sole was visible. I took a group of pics today using my Canon 6d with turntable/lightbox setup.

PhotoCatch output (unedited, normal quality)ĭid a quick walk around the firepit area in my backyard recording 50 seconds of 4k 30fps video on my iphone 11 pro. One more quick comparison: Reality Capture vs PhotoCatch. This turns out much better results than a lidar scanner or even a dedicated consumer 3d scanner under 1k. I think it takes depth of field into account, possibly even using some of the AR kit functionality to make this somehow work so well. fully expecting a failure (none yet).and low and behold another impressive scan, from a quick video with crappy lighting, etc Chose the frame every 4 frames options and processed. This was a short video shot in 4k at 30fps. Not an easy item for any photogrammetry app to deal with.and it would require a bunch of photos. I was just working in my studio (glass lampworker) and decided to take a quick video of my torch, which is metal and has a bunch of shiny parts and intricate knobs and gas lines here and there. The more I play with this software the more amazed I am. (I WILL BE ADDING MORE RESULTS BELOW AS I PROCESS MORE VIDEOS/PHOTOS) The Macbook running the apple API barely gets warm processing these.the windows laptop fans are going crazy.ģdf Zephyr and RC run on a ASUS ROG Zephyrus g, ryzen 9 laptop I am also going to try using some photo sets from my DSLR setup with a turntable, often get poor/nonexistent results from 3df zephyr with those as well. I am going to be experimenting more with this.really curious how good of detail i can get with a video if i got in real close to the subject. Its really amazing how well their photogrammetry API works. Its pretty much flawless.somehow it was able to get every bit of detail in the model AND the texture even with the reflections and glossy surface. and in about 3 minutes i got this result ( didnt crop or modify the model in any way.just opened it in blender to view) So then i decided to load the same set of photos into PhotoCatchĬhose medium quality. 
It took about 7 minutes, but then i had to do a few more steps to get the textured mesh. Only showing the front because there is no back.the models left arm and entire back are fused into a giant white blob. here is my best result from 3df zephyr (paid version). Definitely poor conditions for photogrammetry but I was curious how the Apple API would handle it.īut first. slightly glossy, sitting on a high gloss surface, taken outside in sunlight on a back deck. I tried some photos of a little toy figure. I wanted to try using some photos that have given me problems in RC and 3df zephyr. So i have tried it with a couple other quick phone videos and it produces a good result every time, so far 100% success rate. (notice how it got all of the open areas including the inside of the shoe and throuh the laces etc.)

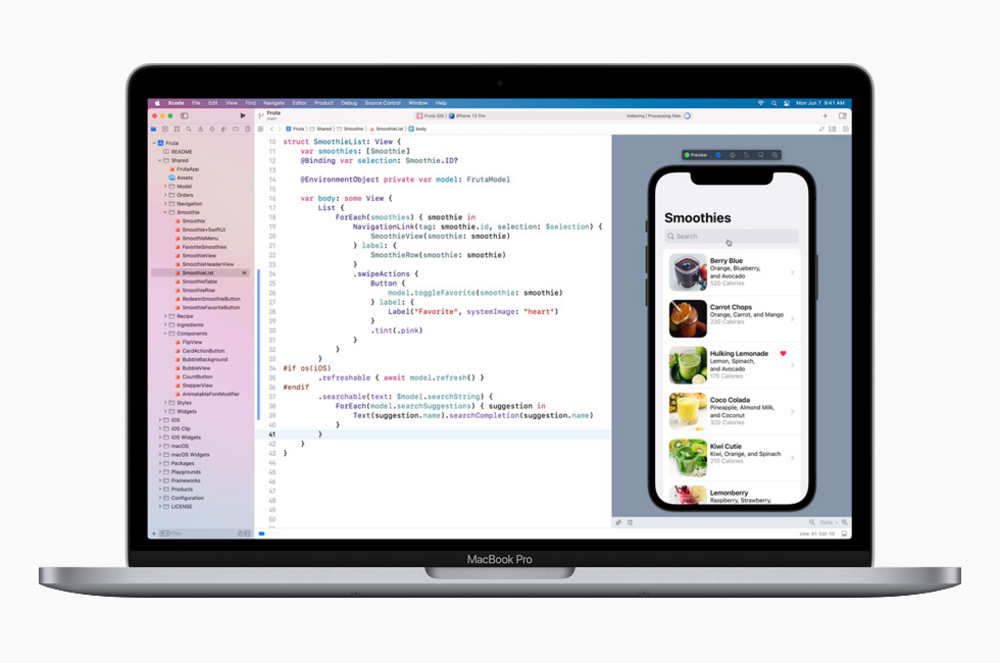

I clicked "Create Model".and in about 6 minutes I had a surprisingly good model of a shoe with great texture quality. In the app i chose the option of using a frame every 7 frames of video and chose medium quality. The video was 40 seconds long, 4k 60fps, iphone 11 pro. I thought.hmm lets see how good it can do with a shitty little iphone video of a shoe (shot on kitchen counter with iphone flash as light source). when you open the app you are given the option of adding a folder full of images or using a video. If you have a mac and are willing to upgrade to Monterey Beta.you can download this new application called PhotoCatch which uses the new photogrammetry API apple has made available to developers.
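For a rough sense of how many stills the app works with when it samples a video, here is a back-of-the-envelope sketch using the shoe video's numbers (40 seconds, 60fps, one frame kept out of every 7). It assumes a constant frame rate and that sampling starts at the first frame (frames 0, 7, 14, ...). How PhotoCatch actually picks frames isn't documented in this post, and the function name `sampled_frame_count` is made up for illustration.

```python
def sampled_frame_count(duration_s: float, fps: float, every_n: int) -> int:
    """Estimate how many frames are kept when taking one frame out of every `every_n`.

    Assumption: constant frame rate, sampling starts at frame 0.
    """
    if every_n < 1:
        raise ValueError("every_n must be at least 1")
    total_frames = int(round(duration_s * fps))
    # Kept frames are 0, every_n, 2*every_n, ... below total_frames,
    # which is ceil(total_frames / every_n) done in integer math.
    return (total_frames + every_n - 1) // every_n


# Shoe video from the post: 40 s at 60fps, one frame every 7 frames.
print(sampled_frame_count(40, 60, 7))
```

Under these assumptions the shoe video comes out to roughly 343 frames fed into the reconstruction.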
